It is sometimes convenient to specify the component setup of a model in Blender instead of Unity3D, both to hide implementation details and to make life easier for artists and designers. This is useful, for example, for marking invisible collision geometry or areas that the AI can't navigate. The easiest way to do that is to use custom properties in Blender.

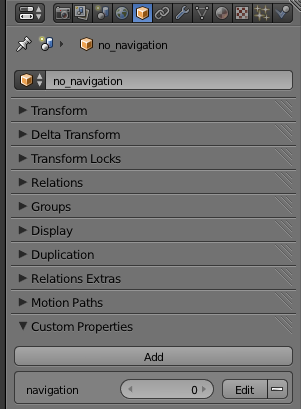

Blender lets you add custom string, float, or integer properties to an object. Custom properties attached to meshes, materials, or textures are stored separately and are currently not picked up by Unity3D.

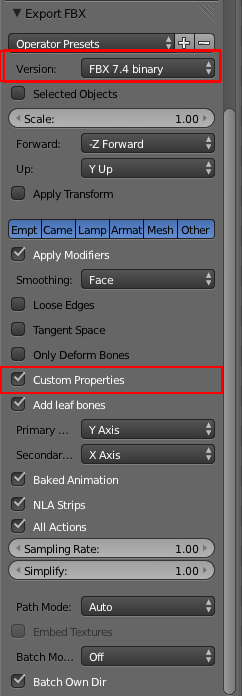

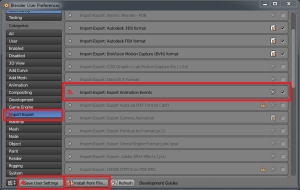

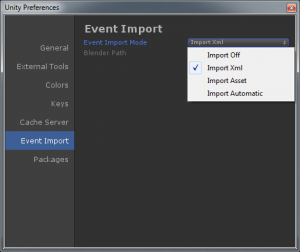

The new binary FBX exporter (available since Blender 2.71) can write these values to an FBX file, which Unity3D picks up during import. Unity3D's automatic Blender importer still uses the old ASCII exporter, so you need to either export files manually or modify the Unity3D import script to select the new exporter. I recommend getting the latest FBX exporter scripts from a nightly build, since they contain many fixes over the version released with 2.71.

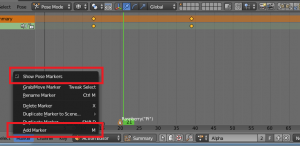

The new binary exporter needs to be selected, and “custom properties” needs to be enabled. I modified my Blender and Unity3D scripts to always use the new exporter and have “custom properties” enabled when models are imported.

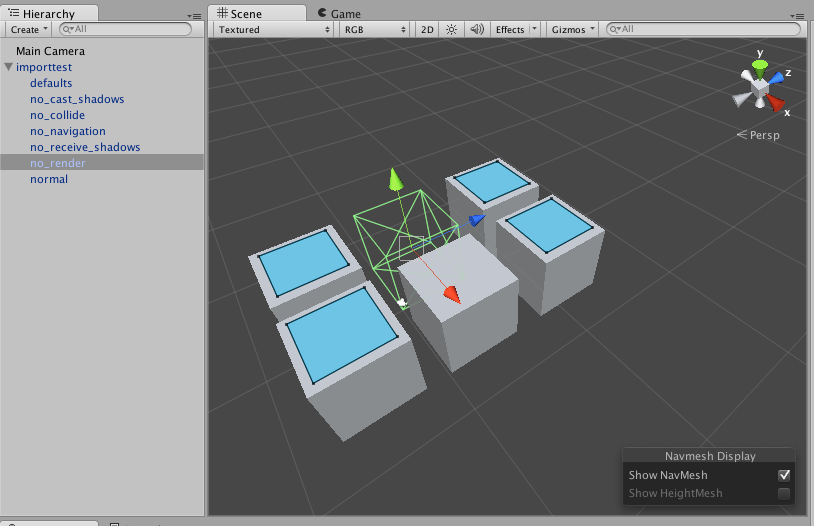

On the Unity3D side, the properties are received through AssetPostprocessor.OnPostprocessGameObjectWithUserProperties. It is a good idea to start with the example script to check that the custom properties are coming across.

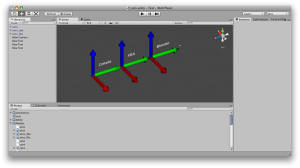
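
If you do not have the example script at hand, a minimal logging postprocessor along these lines should be enough to check what arrives. This is only a sketch: the class name is arbitrary, and like any AssetPostprocessor it has to live in an Editor folder.

```csharp
using UnityEngine;
using UnityEditor;

// Hypothetical helper (the class name is an assumption): logs every custom
// property that Unity3D receives while importing a model.
public class LogUserProperties : AssetPostprocessor {

    void OnPostprocessGameObjectWithUserProperties(GameObject go, string[] propNames, object[] values) {
        for (int i = 0; i < propNames.Length; i++) {
            object value = values[i];
            // the runtime type shows whether a value arrived as int, float or string
            string typeName = value != null ? value.GetType().Name : "null";
            Debug.Log(assetPath + ": " + go.name + "." + propNames[i] + " = " + value + " (" + typeName + ")");
        }
    }
}
```

Reimport a model after adding the script; each property on each node should show up as one line in the Console.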

I am using several custom properties currently:

- static: mark GameObject as static, using GameObjectUtility.SetStaticEditorFlags

- navigation: set or clear the StaticEditorFlags.NavigationStatic flag on a GameObject

- render: delete the MeshFilter/MeshRenderer/SkinnedMeshRenderer if set to 0

- collision: delete the MeshCollider if set to 0

- receiveshadows: set or clear the receiveShadows flag on a MeshRenderer or SkinnedMeshRenderer

- castshadows: set or clear the castShadows flag on a MeshRenderer or SkinnedMeshRenderer

It is of course possible to add more game-specific components to a GameObject. Deleting components seems to be safe, but deleting GameObjects causes an error, so I just mark those objects as EditorOnly instead.
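
As a rough sketch of adding a component from a property, the postprocessor below attaches a Rigidbody when an integer custom property called "rigidbody" is non-zero. Both the property name and the class name are made up for illustration and are not part of my script.

```csharp
using UnityEngine;
using UnityEditor;

// Hypothetical example: the class name and the "rigidbody" property are assumptions.
public class AddGameComponents : AssetPostprocessor {

    void OnPostprocessGameObjectWithUserProperties(GameObject go, string[] propNames, object[] values) {
        for (int i = 0; i < propNames.Length; i++) {
            if (propNames[i].ToLower() != "rigidbody") {
                continue;
            }
            // only integer properties are handled here; other value types are ignored
            bool enabled = values[i] is int && (int)values[i] != 0;
            if (enabled && go.GetComponent<Rigidbody>() == null) {
                go.AddComponent<Rigidbody>();
            }
        }
    }
}
```

The same pattern should work for your own MonoBehaviour types, as long as they are compiled before the model is imported.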

My AssetPostprocessor script is probably a bit overkill, because it supports setting file-global defaults in an Empty called “defaults” in Blender. If you hardwire the defaults you may be able to just process the properties once in OnPostprocessGameObjectWithUserProperties. That function is called once for each Blender node that has custom properties. To process all GameObject nodes you need to handle OnPostprocessModel as well.

```csharp
using UnityEngine;
using UnityEditor;
using System.Collections.Generic;

// automatically configure game objects imported from a model
public class SetupGameObject : AssetPostprocessor {

    // file-global defaults, read from the Empty called "defaults"
    Dictionary<string, object> defaults = new Dictionary<string, object>();

    // custom properties collected for each imported GameObject
    Dictionary<GameObject, Dictionary<string, object>> settings = new Dictionary<GameObject, Dictionary<string, object>>();

    // any non-zero number counts as true; Convert also accepts float values,
    // where a direct (int) cast on the boxed object would throw
    private static bool IsEnabled(object value) {
        return System.Convert.ToDouble(value) != 0.0;
    }

    // look up a property on the object, then in the file defaults, then use defaultValue
    private bool GetProperty(GameObject go, string key, bool defaultValue) {
        Dictionary<string, object> objectSettings = null;
        if (settings.TryGetValue(go, out objectSettings)) {
            object o = null;
            if (objectSettings.TryGetValue(key, out o)) {
                return IsEnabled(o);
            }
        }
        object os = null;
        if (defaults.TryGetValue(key, out os)) {
            return IsEnabled(os);
        }
        return defaultValue;
    }

    private void ApplySettings(GameObject go) {
        bool castShadows = GetProperty(go, "castshadows", true);
        bool receiveShadows = GetProperty(go, "receiveshadows", true);
        bool generateCollider = GetProperty(go, "collision", true);
        bool isStatic = GetProperty(go, "static", false);
        bool renderMesh = GetProperty(go, "render", true);
        bool navigation = GetProperty(go, "navigation", true);

        if (isStatic) {
            GameObjectUtility.SetStaticEditorFlags(go, StaticEditorFlags.LightmapStatic | StaticEditorFlags.OccluderStatic | StaticEditorFlags.OccludeeStatic | StaticEditorFlags.BatchingStatic | StaticEditorFlags.NavigationStatic | StaticEditorFlags.OffMeshLinkGeneration);
        } else {
            GameObjectUtility.SetStaticEditorFlags(go, 0);
        }

        // the navigation flag is set or cleared independently of "static"
        if (navigation) {
            GameObjectUtility.SetStaticEditorFlags(go, GameObjectUtility.GetStaticEditorFlags(go) | StaticEditorFlags.NavigationStatic);
        } else {
            GameObjectUtility.SetStaticEditorFlags(go, GameObjectUtility.GetStaticEditorFlags(go) & ~StaticEditorFlags.NavigationStatic);
        }

        MeshRenderer meshRenderer = go.GetComponent<MeshRenderer>();
        MeshFilter meshFilter = go.GetComponent<MeshFilter>();
        SkinnedMeshRenderer skinnedMeshRenderer = go.GetComponent<SkinnedMeshRenderer>();

        if (renderMesh) {
            // castShadows is the Unity 4 API; newer versions use shadowCastingMode
            if (meshRenderer) {
                meshRenderer.castShadows = castShadows;
                meshRenderer.receiveShadows = receiveShadows;
            }
            if (skinnedMeshRenderer) {
                skinnedMeshRenderer.castShadows = castShadows;
                skinnedMeshRenderer.receiveShadows = receiveShadows;
            }
        } else {
            // render == 0: remove everything that draws the mesh
            if (meshRenderer) {
                Object.DestroyImmediate(meshRenderer);
            }
            if (meshFilter) {
                Object.DestroyImmediate(meshFilter);
            }
            if (skinnedMeshRenderer) {
                Object.DestroyImmediate(skinnedMeshRenderer);
            }
        }

        MeshCollider meshCollider = go.GetComponent<MeshCollider>();
        if (!generateCollider) {
            if (meshCollider) {
                Object.DestroyImmediate(meshCollider);
            }
        }
    }

    private void ApplyToChildren(GameObject go) {
        // apply settings to all gameobjects
        ApplySettings(go);
        foreach (Transform t in go.transform) {
            ApplyToChildren(t.gameObject);
        }
    }

    // this is called once by Unity3D after OnPostprocessGameObjectWithUserProperties
    void OnPostprocessModel(GameObject go) {
        ApplyToChildren(go);
    }

    // this is only called by Unity3D for game objects with properties
    void OnPostprocessGameObjectWithUserProperties(GameObject go, string[] properties, object[] values) {
        bool isDefault = go.name.ToLower() == "defaults";
        if (isDefault) {
            // I can't seem to delete this without errors, so let's just mark it "Editor only"
            go.tag = "EditorOnly";
        }

        Dictionary<string, object> newDict = new Dictionary<string, object>();
        for (int i = 0; i < properties.Length; i++) {
            string prop = properties[i].ToLower();
            object val = values[i];
            if (isDefault) {
                defaults[prop] = val;
            }
            newDict[prop] = val;
        }
        settings[go] = newDict;
    }
}
```
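
For comparison, here is a rough sketch of the simpler approach mentioned before the script: hardwired defaults and no “defaults” Empty, with each property applied as soon as it arrives. The class name is made up, only two of the properties are handled, and it assumes the renderer is already attached to the GameObject when the callback runs, which I have not verified.

```csharp
using UnityEngine;
using UnityEditor;

// Hypothetical simplified variant (class name is an assumption):
// hardwired defaults, properties applied directly in the callback.
public class SetupGameObjectSimple : AssetPostprocessor {

    // only an integer 0 switches a feature off; any other value leaves it on
    static bool IsZero(object value) {
        return value is int && (int)value == 0;
    }

    void OnPostprocessGameObjectWithUserProperties(GameObject go, string[] propNames, object[] values) {
        for (int i = 0; i < propNames.Length; i++) {
            string prop = propNames[i].ToLower();
            bool enabled = !IsZero(values[i]);

            if (prop == "navigation") {
                // set or clear only the NavigationStatic bit, keeping the other flags
                StaticEditorFlags flags = GameObjectUtility.GetStaticEditorFlags(go);
                if (enabled) {
                    flags |= StaticEditorFlags.NavigationStatic;
                } else {
                    flags &= ~StaticEditorFlags.NavigationStatic;
                }
                GameObjectUtility.SetStaticEditorFlags(go, flags);
            } else if (prop == "receiveshadows") {
                // Renderer is the common base of MeshRenderer and SkinnedMeshRenderer
                Renderer renderer = go.GetComponent<Renderer>();
                if (renderer) {
                    renderer.receiveShadows = enabled;
                }
            }
        }
    }
}
```

Nodes without custom properties are never visited by this callback, so this variant only works if the hardwired defaults match what the importer already produces for untouched objects.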